I’m looking for a truly effective free alternative to GPTinf’s AI humanizer tool. The content I generate keeps getting flagged as AI-written by different detectors, and GPTinf’s free options are either limited or not giving consistent results. Can anyone recommend a reliable free tool or workflow that helps text pass common AI detection checks without ruining readability?

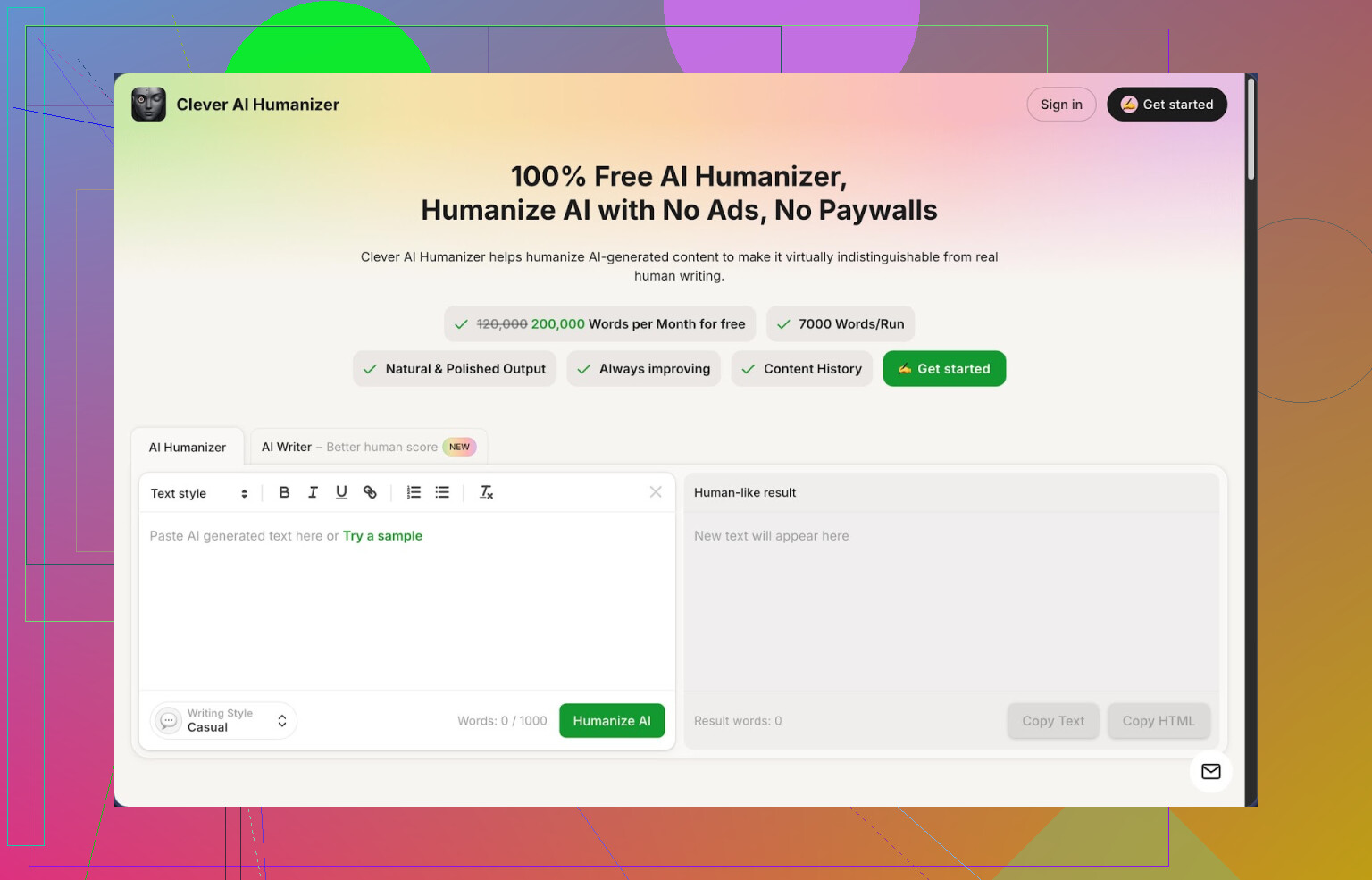

1. Clever AI Humanizer Review

Clever AI Humanizer is the one I keep coming back to when people ask for a free AI humanizer that is not useless after 3 prompts.

Here is what I saw after a few days of pushing it pretty hard:

- Free tier gives around 200,000 words each month

- Up to 7,000 words in a single run

- Three output styles: Casual, Simple Academic, Simple Formal

- Built in AI writer so you write and humanize in one place

On ZeroGPT, using the Casual style, my test texts showed 0% AI on three different samples. I do not fully trust any detector as a final judge, but if your teacher, manager, or client is stuck on those tools, it helps.

I write a lot with AI and the pattern is always the same: the text feels stiff, detectors scream 100% AI, and if you over-fix it by hand, you waste an hour on a 1,000 word draft. That is why I went hunting for humanizers again this year and ended up staying with this one for longer than I expected.

Here is how the main part works in practice.

You paste your AI text, pick a style (I usually use Casual for blogs and Simple Academic for reports), hit the button, and you get a new version in a few seconds. The output tries to break up the usual AI rhythm, adds a bit of roughness, and keeps the structure mostly intact. I compared original and output side by side for a few client articles, and the meaning stayed on track without random facts or hallucinated sources.

The bigger word limit matters. I often drop 5,000 word documents from ChatGPT or Claude in one go, instead of slicing them into tiny chunks and praying the tone stays consistent. With 200k words a month, I stopped counting tokens, which removed one headache from the workflow.

What I noticed while editing afterward is that the tool does not nuke your intent. It nudges phrasing, changes sentence shapes, and reorders small bits, but does not flip your claims or rewrite arguments into something else. For essays where wording is sensitive, I still skim carefully, but I rarely need to revert whole paragraphs.

Outside the main humanizer, there are a few extra modules that turned out useful when I tried to replace three different tabs with one page.

The Free AI Writer is for when you have no draft yet. You give it a topic, a short prompt, or a rough outline, and it spits out an essay, blog post, or article. From there, you can send that same text through the humanizer without leaving the interface. When I tested this combo, the human score on detectors like ZeroGPT and GPTZero was usually better than when I copy-pasted generic ChatGPT output and humanized it afterward, maybe because the original generation is already tuned for this use case.

The Free Grammar Checker is straightforward. You paste text, it auto fixes spelling, commas, agreement, and some phrasing issues. I ran a few pieces through both this and Grammarly. Grammarly still caught more nuance and style issues, but for quick cleanup before sending something to a client or posting online, this was enough most of the time.

The Free AI Paraphraser Tool is where I ended up spending more time than expected. If you have an old draft, client brief, or product description and need a different tone while keeping the same facts, you run it through here. For SEO work or updating outdated posts, it helped me build alternate versions that did not read like spun junk. I tested it on a 2,500 word buyer guide and the structure stayed the same while wording shifted enough that plagiarism tools did not complain.

Putting it all together, you get four tools in one UI: humanizer, writer, grammar checker, and paraphraser. The workflow I settled into looks like this:

- Draft in ChatGPT or with their AI Writer

- Run the full piece through the Humanizer in Casual or Simple Academic

- Scan the output and clean any weird phrasing with Grammar Checker

- Use Paraphraser for sections where a client wants a second tone option

This shaved time off my usual process, since I was not bouncing between multiple sites with different word caps and token systems.

Is it perfect? No.

Some detectors still flag parts of the text as AI. GPTZero, for example, sometimes marked long academic style paragraphs as partially AI even after humanizing. If your school or company is strict, you still need to mix in your own edits, change section order, or add personal details to stay safer.

Word count also tends to grow. When it rewrites, it often expands sentences instead of shortening them. A 1,500 word article from ChatGPT ended up at 1,900 words after humanization multiple times. If you have hard word limits, you will need to trim by hand afterward.
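To put a number on that growth, here is a minimal sketch of the arithmetic in Python. The function name `growth_report` and the 1,600 word limit are made up for illustration; only the 1,500 and 1,900 figures come from my test above.

```python
from typing import Optional


def growth_report(original_words: int, rewritten_words: int,
                  limit: Optional[int] = None) -> dict:
    """Summarize how much a rewrite grew and how much must be trimmed."""
    added = rewritten_words - original_words
    growth_pct = round(added / original_words * 100, 1)
    # Words over the limit; 0 when there is no limit or the text already fits.
    to_trim = max(0, rewritten_words - limit) if limit is not None else 0
    return {"added": added, "growth_pct": growth_pct, "to_trim": to_trim}


# 1,500 -> 1,900 words, checked against a hypothetical 1,600 word limit.
print(growth_report(1500, 1900, limit=1600))
```

That is roughly a 27% increase, so for anything with a hard cap it is worth budgeting trimming time up front.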

Even with those issues, for something that is still fully free while I am writing this, it is the tool I recommend first when someone needs a humanizer and refuses to pay subscription fees.

If you want a longer breakdown with screenshots and more AI detection proofs, there is a full writeup here: https://cleverhumanizer.ai/community/t/clever-ai-humanizer-review-with-ai-detection-proof/42

Video walkthrough is here, where someone goes through tests step by step:

Clever AI Humanizer YouTube Review https://www.youtube.com/watch?v=G0ivTfXt_-Y

If you want to see what other users are saying and compare tools, these Reddit threads helped me:

Best Ai Humanizers on Reddit https://www.reddit.com/r/DataRecoveryHelp/comments/1oqwdib/best_ai_humanizer/

All about humanizing AI https://www.reddit.com/r/DataRecoveryHelp/comments/1l7aj60/humanize_ai/

Short version since you asked for something that works: there is no magic one-click “undetectable” button, but you can get close for free if you mix tools and your own edits.

A few options that worked for me:

- Clever Ai Humanizer

I agree with a lot of what @mikeappsreviewer said, but I don’t treat it as a fire and forget solution.

What I do differently:

• I run shorter sections, 800 to 1200 words, not whole 5k chunks. The output stays more natural.

• I always tweak the first and last 2–3 sentences by hand. Detectors love formulaic openings and closings.

• I avoid full “academic” tone if the final use is school work. I mix Casual or Simple Formal and then add my own subject specific phrases.

On ZeroGPT and GPTZero I often get “mostly human” after this. Not perfect. Enough to stop instant auto-flags.

- Multi pass rewrite method using free tools

If you do not want to depend on a single humanizer, this combo works well:

Step 1: Generate with any LLM, keep it short.

Write in 300–500 word blocks. Long monotone sections trigger detectors.

Step 2: Paraphrase in chunks.

Use Clever Ai Humanizer’s paraphraser or any free paraphraser. Change wording but keep facts.

Key here: do not paraphrase the entire doc in one go. Detectors pick up repeated rhythm.
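If you would rather not split a long draft by hand, a small script can do it. This is only a rough sketch: `chunk_by_paragraph` is a hypothetical helper, it assumes paragraphs are separated by blank lines, and the 500 word default just mirrors the block size from Step 1.

```python
from typing import List


def chunk_by_paragraph(text: str, max_words: int = 500) -> List[str]:
    """Group whole paragraphs into chunks of at most max_words words.

    A single paragraph longer than max_words is kept intact as its own
    chunk rather than being cut mid-paragraph.
    """
    chunks: List[str] = []
    current: List[str] = []
    count = 0
    for para in text.split("\n\n"):
        para = para.strip()
        if not para:
            continue
        n = len(para.split())
        # Start a new chunk if adding this paragraph would exceed the cap.
        if current and count + n > max_words:
            chunks.append("\n\n".join(current))
            current, count = [], 0
        current.append(para)
        count += n
    if current:
        chunks.append("\n\n".join(current))
    return chunks


if __name__ == "__main__":
    sample = "\n\n".join(["word " * 200] * 4)  # four 200-word paragraphs
    for i, chunk in enumerate(chunk_by_paragraph(sample), start=1):
        print(i, len(chunk.split()))
```

Splitting on paragraph boundaries keeps each chunk readable on its own, which matters more than hitting the cap exactly.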

Step 3: Add personal noise.

Manually inject things that current LLMs often avoid:

• Short incomplete sentences here and there.

• 1–2 minor typos that you fix in obvious spots but leave 1 subtle typo or awkward phrase.

• Specific references: your city, your teacher’s assignment wording, your own past work, class examples.

Step 4: Sentence length variety.

Take one paragraph and force variety:

• One line with 5–8 words.

• A longer line around 25–30 words.

• Then a normal one around 15 words.

Humans do this a lot. AI outputs often sit in a narrow band.
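A quick way to see whether a paragraph sits in that narrow band is to measure it. The sketch below is a rough check, not how any detector works: the function names are made up, and the sentence splitter is naive (it breaks on ., ! and ? followed by whitespace, so abbreviations like "e.g." will throw it off).

```python
import re
import statistics
from typing import Dict, List


def sentence_lengths(paragraph: str) -> List[int]:
    """Return the word count of each sentence, using a naive splitter."""
    sentences = re.split(r"(?<=[.!?])\s+", paragraph.strip())
    return [len(s.split()) for s in sentences if s]


def length_spread(paragraph: str) -> Dict[str, float]:
    """Summarize how much sentence length varies within a paragraph."""
    lengths = sentence_lengths(paragraph)
    if not lengths:
        return {"min": 0, "max": 0, "stdev": 0.0}
    return {
        "min": min(lengths),
        "max": max(lengths),
        # Population standard deviation: larger means more variety.
        "stdev": round(statistics.pstdev(lengths), 1),
    }


if __name__ == "__main__":
    text = ("Short one here. This is a much longer sentence that keeps "
            "going for a while to add some variety. Then a normal one follows.")
    print(sentence_lengths(text))
    print(length_spread(text))
```

If min and max are close together across a whole paragraph, that is the one to rework by hand.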

- Structural edits that detectors hate

Most people only change words. Detectors look at structure. Try this:

• Swap two middle paragraphs.

• Merge two short paragraphs into one.

• Add one short paragraph with a clear opinion or personal stance.

• Change headings to something less generic.

- What not to trust

I slightly disagree with leaning on single detector screenshots as proof.

My tests:

• Same text passed ZeroGPT as human but hit 80–90 percent AI on Originality.

• After manual edits plus Clever Ai Humanizer pass, all three (ZeroGPT, GPTZero, Originality) moved toward “mixed” or “likely human”.

If your teacher or boss uses only one free detector, you only need to look good on that one in practice. For clients or public blogs, I aim for “mixed” across multiple tools, then I stop obsessing and move on.

- Practical workflow you can copy

Here is a barebones flow that keeps everything free:

- Write with any LLM in small sections.

- Run each section through Clever Ai Humanizer with Casual or Simple Formal.

- Paraphrase 1–2 stubborn paragraphs again with another free paraphraser.

- Manually:

• Change sentence lengths.

• Insert 1–2 small personal details.

• Fix some errors but leave the text slightly imperfect.

- Run your final doc through the same detector your teacher or client uses and adjust only the worst flagged areas.

Last point. If this is for school and they are strict, you protect yourself by adding your own reasoning. Put your own examples, your own mistakes, your own references. No tool replaces that. The humanizer helps, but your edits are what push it over the line.

If your goal is “never get flagged again,” you’re chasing a unicorn. Detectors are probabilistic guess machines, not lie detectors. That said, you can get your hit rate way down without paying for GPTinf.

Since @mikeappsreviewer and @jeff already walked through Clever Ai Humanizer itself and some multi‑pass tricks, here’s what I’d add that actually differs from their approach:

1. Use Clever Ai Humanizer backwards from how most people do

Everyone:

LLM → long draft → humanizer → pray → detector.

What I’ve found works better:

- Outline by hand (headings + 2–3 bullet points per section).

- For each section, write a rough, short paragraph yourself first, even if it’s ugly.

- Feed that to Clever Ai Humanizer and tell it to “improve” rather than “rewrite everything”.

You end up with text that still has your structure, your weird phrasing, and just enough polish. Detectors seem to hate fully machine‑structured pieces more than machine‑worded ones.

2. Don’t always rewrite the whole thing

I actually disagree a bit with the “run every section through some tool” mentality.

Try this instead:

- Generate with your LLM.

- Run only the most “AI-ish” paragraphs through Clever Ai Humanizer (the ones that sound like generic blog spam or textbook intros).

- Leave 20–30% of the draft fully yours: small rants, examples from your life, side comments.

Detectors lean heavily on consistency. Perfectly consistent tone across 1,500+ words is suspicious. Having a few raw, clunky sections helps.

3. Change information density, not just style

Detectors are not just looking at tone. They also look at how tightly packed facts and transitions are.

Tools like Clever Ai Humanizer are good, but use them to:

- Add one or two irrelevant but plausible side points.

- Remove a transition or two so the flow is slightly choppy.

- Break overly tidy logic: human arguments wander a bit.

Instead of:

“First, X. Second, Y. Finally, Z.”

Try:

“First, X. I’ve also seen cases where A happens instead. Then Y. Z is kind of optional in some situations.”

You can nudge it in that direction with a short instruction before you paste:

“Make this sound a bit more meandering and less like a textbook. Keep small tangents.”

4. Stop chasing 0% AI on every detector

Small unpopular opinion: if your text hits “mixed” or “likely human” on one or two major tools, that’s usually enough. I’ve had:

- 10–20% AI on one detector

- 70% human on another

- “Unsure” on a third

Nobody has a universal standard. Obsessing over getting 0% on ZeroGPT, GPTZero, Originality, etc. is how you burn hours and still lose.

Practical target:

- One pass through Clever Ai Humanizer for the stiff parts.

- Light manual edits as @jeff suggested.

- Run it only through the specific detector your teacher, client, or platform uses, not every tool on the internet.

5. Use Clever Ai Humanizer as a “noise generator,” not just a polisher

You can push it a bit:

- Feed it your text and explicitly ask for: “slightly inconsistent tone” or “mix of casual and semi formal.”

- Ask it to keep 1–2 awkward phrases “so it doesn’t sound too polished.”

Then keep some of that roughness. Don’t clean everything with grammar tools after. Humans are messy. Perfect grammar across 2k words screams LLM harder than one typo.

6. If this is for school, cover yourself

No tool, not even Clever Ai Humanizer or GPTinf, protects you from policies that ban any AI assistance. If your school or job is strict:

- Make sure you can explain your arguments out loud without notes.

- Keep earlier handwritten or rough drafts as proof you actually worked on it.

- Change at least one example or case study to something that actually happened in your class, job, or city.

Even the best “AI humanizer” is only there to smooth your process, not to act as a shield if someone seriously investigates.

TL;DR:

Yes, Clever Ai Humanizer is one of the few free tools that actually moves the needle, especially if you’re tired of GPTinf limits. Just don’t treat it like a magic “cloak of invisibility.” Mix it with your own structure, your own content, and stop trying to hit 0% on every detector or you’ll spend more time beating the scanner than learning or writing anything.

Short version: there’s no perfect “AI → human” button, but you can get reliably less detectable content by changing how you use humanizers, not just which one.

I’ll skip what @jeff and @mikeappsreviewer already covered (chunking, sentence variety, structural tweaks) and what @stellacadente added about mixed tools, and focus on angles they didn’t lean on as much.

1. Use topic-level noise, not just sentence-level rewrites

Most people only touch wording. Detectors also look at:

- How “on rails” the argument is

- How clean the topic transitions are

- How balanced the coverage feels

Trick that helps a lot:

- Generate or draft your piece.

- Before using Clever Ai Humanizer, add one or two small “off-topic but related” micro sections yourself. For example:

  - A quick anecdote

  - A short “here’s where this didn’t work for me”

  - One paragraph where you admit uncertainty

These human-looking “imperfections” at the idea level matter more than swapping synonyms.

Then run only those surrounding paragraphs through Clever Ai Humanizer so the style blends but your content quirks stay.

2. Rotate sources of AI, not just humanizers

This is where I slightly disagree with everyone focusing so heavily on a single stack.

If you always generate with the same LLM and always clean with the same humanizer, your fingerprint gets predictable. For free workflows:

- Use one LLM for outline + rough draft

- Use a different free LLM just to rewrite 10–20% of the text

- Then run the whole thing through Clever Ai Humanizer for tone smoothing

That mix of “voices” plus human tweaks fools detectors more than pounding the same text through the same model three times.

3. Clever Ai Humanizer: actual pros & cons that matter

Pros:

- Genuinely high free word limit, so you are not dead after a few tries.

- Handles long inputs without exploding your structure.

- Styles like Casual / Simple Academic are actually distinct enough to mix in one document.

- Works well as a finisher after other tools, not only as the main humanizer.

Cons:

- Tends to inflate word count, which can be a problem in tight assignments.

- Output can still feel “too clean” if you don’t reintroduce minor flaws manually.

- If you push it with full-academic style, some detectors still light up on big paragraphs.

- You can get lazy and stop doing your own content thinking, which is exactly what gets you in trouble with strict teachers.

So I agree with @mikeappsreviewer that Clever Ai Humanizer is one of the few free tools that actually moves detector scores, but I would not let it touch 100% of my text.

4. Micro-pattern breaking that most people skip

Everyone talks about sentence length variety. What I see detectors key on lately:

- Overuse of “In conclusion,” “Overall,” “Additionally,” etc. Kill or replace those.

- Perfect parallel lists: “First…, Second…, Third…” Mix that up or break the pattern.

- Perfectly neutral tone. Add 2–3 emotionally slanted lines: “This is honestly annoying…” “I find this part confusing…”

Do this by hand after Clever Ai Humanizer. Just a 3–5 minute pass where you:

- Delete at least two generic transition phrases

- Add one skeptical or opinionated sentence every few paragraphs

- Intentionally keep one slightly clunky phrasing that sounds like you, not like a copywriter

That tiny pass plus a humanizer often moves you from “obviously AI” to “mixed/unsure” on harsher tools.
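For the first item in that pass, a few lines of Python can show where the generic transitions are before you start deleting. This is just a sketch: the phrase list only contains the three examples named above, and `count_transitions` is a hypothetical helper you would extend with your own habits.

```python
import re
from collections import Counter
from typing import Iterable

# Only the examples mentioned above; add your own repeat offenders.
GENERIC_TRANSITIONS = ["in conclusion", "overall", "additionally"]


def count_transitions(text: str,
                      phrases: Iterable[str] = GENERIC_TRANSITIONS) -> Counter:
    """Count case-insensitive whole-phrase matches for each transition."""
    lowered = text.lower()
    counts: Counter = Counter()
    for phrase in phrases:
        pattern = r"\b" + re.escape(phrase) + r"\b"
        hits = len(re.findall(pattern, lowered))
        if hits:
            counts[phrase] = hits
    return counts


if __name__ == "__main__":
    draft = ("Overall, it works. Additionally, it is fast. "
             "In conclusion, overall it is fine.")
    for phrase, n in count_transitions(draft).most_common():
        print(f"{phrase}: {n}")
```

It only counts, so deciding which ones to cut or reword is still up to you.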

5. Don’t fully trust any one detector (or one reviewer)

You already saw it in the other comments:

- @jeff leans on structural edits

- @stellacadente on workflow logic

- @mikeappsreviewer on long-form humanization with Clever Ai Humanizer

All of that works sometimes. I have had the same text:

- Pass as “likely human” on one detector

- Be labeled “strong AI signals” on another

So instead of chasing 0% AI everywhere, do this:

- Find out which detector the actual gatekeeper uses (teacher, client, boss, platform).

- Optimize mostly for that, and only sanity check with 1 extra tool.

- Once you hit “mixed” or “mostly human” on both, stop grinding and move on.

6. Concrete hybrid workflow that avoids repeating their exact steps

Try this variant if you want something different from the previous answers:

- Draft your outline and 1–2 messy paragraphs by hand.

- Use an LLM to fill in the rest, but cap each chunk at ~300 words.

- Manually insert:

  - One small tangent per 600–800 words

  - One example from your real life, class, or city

- Send only the most robotic-sounding sections to Clever Ai Humanizer in Casual style. Leave your personal bits untouched.

- Final 5-minute pass:

  - Remove 2–3 generic “AI phrases”

  - Break 1 or 2 perfect transitions

  - Add one imperfect sentence you would realistically say

Then run it through the specific detector that will be used against you. If it still screams, only rewrite the flagged part, not the whole doc.

Bottom line:

Clever Ai Humanizer is worth keeping in your toolbox, but the real “humanization” happens where their tool ends and your messy, opinionated edits start. Use it to smooth, not to erase your own fingerprints.