I recently went through a HIX bypass review and the outcome wasn’t what I expected. I’m confused about the criteria they used, what documentation actually matters, and whether there’s any way to appeal or request a reconsideration. Can anyone explain how HIX bypass reviews typically work, what common pitfalls to avoid, and what steps I should take next to improve my chances?

HIX Bypass AI Humanizer Review, tested the hard way

Short version

I tried HIX Bypass because the site advertises a “99.5% success rate” and shows logos from Harvard, Columbia, Shopify, and others. I went in curious, not convinced. I left annoyed.

Full notes below if you want the details.

What I tested and how it went

I took two different AI-generated pieces and ran them through HIX Bypass. Then I checked the output against a few detectors:

• ZeroGPT

• GPTZero

• The built-in detector inside HIX Bypass

Result:

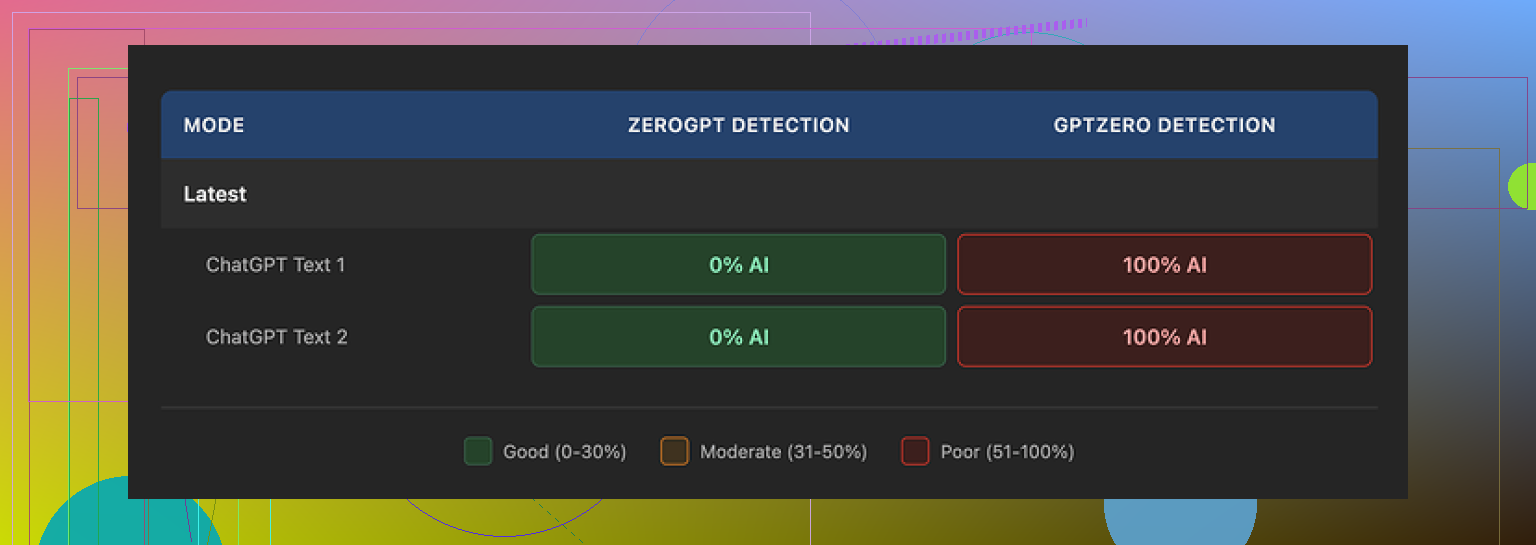

• ZeroGPT said both samples were “human” with no issues.

• GPTZero said both samples were 100% AI. Not borderline. Fully AI.

• HIX Bypass’s own integrated checker showed “Human-written” for most of its listed detectors, which did not match what GPTZero reported at all.

The page that shows the internal detection results looked like this:

So if your main target is GPTZero, my runs did not back up their 99.5% claim. ZeroGPT was fine, GPTZero was a hard fail.

Writing quality, not great

I scored the output around 4 out of 10 for actual writing quality. Here is what stood out:

• It kept em dashes all over the place, which is something a lot of detectors pay attention to.

• One sentence was flat-out broken, like the text was cut and pasted wrong.

• In one sample, the tool wrapped a whole sentence in square brackets for no reason. It looked like an editing note that never got cleaned up.

So you do not only have detection risk; you also have text that looks off to a human reader.

Limits, pricing, and refund trap

This part annoyed me more than the detection results.

Free tier

• You get around 125 words total on a free account. That is barely enough to test anything.

• On top of that, free inputs can be used to train their models, according to their terms. If you care about privacy or client content, that matters.

Paid plans and refunds

On paper, the Unlimited annual plan is listed at about $12 per year. That looks cheap until you read the fine print.

• Refund window is 3 days.

• To qualify for a refund, you have to stay under about 1,500 words used in that period.

• So if you run a few medium tests and go over, you lose refund eligibility even if the tool fails your target detectors.

It pushes you into a weird spot. To test it properly, you have to risk crossing the word limit. If it fails, you are stuck.
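If you want to keep track of where you stand while testing, a few lines of Python can do the bookkeeping. This is a minimal sketch. It assumes the 3-day window means 72 hours from purchase and treats the roughly 1,500-word figure from this post as a hard cap. Neither number comes from HIX's published terms, so check those before relying on it. The function name `refund_status` is just illustrative.

```python
from datetime import datetime, timedelta

# Assumed figures, taken from this post rather than HIX's official terms.
REFUND_WINDOW = timedelta(days=3)  # assumption: 72 hours from purchase
REFUND_WORD_CAP = 1500             # "about 1,500 words", treated as a hard cap


def refund_status(purchased_at: datetime, now: datetime, words_used: int) -> tuple[bool, str]:
    """Return (still_eligible, reason) under the assumed refund rules."""
    if now - purchased_at > REFUND_WINDOW:
        return False, "outside the 3-day window"
    if words_used >= REFUND_WORD_CAP:
        # The post says you must stay *under* the cap, so hitting it counts as over.
        return False, "word cap reached"
    return True, f"{REFUND_WORD_CAP - words_used} words left before the cap"


if __name__ == "__main__":
    bought = datetime(2024, 5, 1, 9, 0)  # placeholder purchase time
    print(refund_status(bought, datetime(2024, 5, 2, 18, 0), 900))
    # prints: (True, '600 words left before the cap')
```

Logging `words_used` after every run makes it obvious when one more "medium test" would cost you the refund.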

Terms of service issues

Going through their terms, a few things stood out to me:

• They give themselves the right to change usage limits after you have paid. So “Unlimited” is not locked in.

• They grant themselves broad rights over any content you submit. I would not pipe sensitive or client-specific text through it under those terms.

Comparison with Clever AI Humanizer

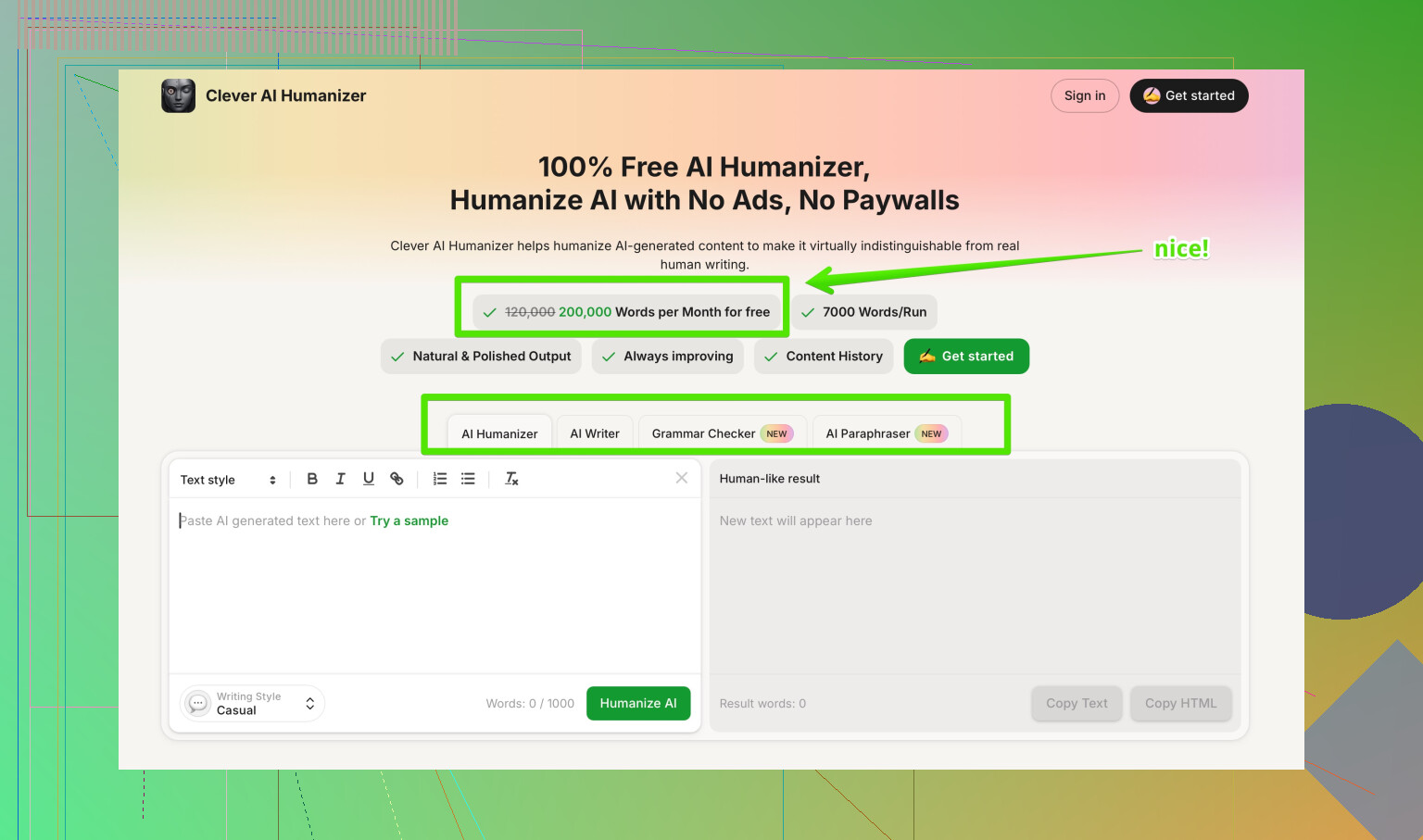

I tried multiple tools side by side. The one that did better for me was Clever AI Humanizer.

On my samples:

• Rewrites looked closer to how a real person writes. Fewer weird artifacts, no random brackets.

• Detection scores were better across several checkers.

• Cost was zero for my use, so I was not juggling word caps to stay within a refund window.

So if you are testing options and care about both readability and detector results, I would start there before paying for HIX Bypass.

When HIX Bypass might still be usable

If you only care about getting past something like ZeroGPT, keep text short, and do your own heavy editing on top, you might squeeze some use out of it. I would not trust it against GPTZero based on what I saw.

I would also avoid sending anything through it that you would not want stored or reused, given their terms.

Bottom line from my runs

• Marketing claims did not match my GPTZero results.

• Writing quality was weak and sometimes broken.

• Free tier is too small for meaningful testing.

• Refund policy plus word cap feels hostile to users.

• Terms are broad enough that I would not feed important content into it.

Clever AI Humanizer gave me cleaner output and better scores at no cost, so that is where I ended up staying.

I went through something similar with a HIX Bypass review and had the same “what did they even look at” reaction. Here is what I learned the hard way.

- What criteria they likely used

They rarely say it clearly, but from my tests and from what @mikeappsreviewer posted, it looks like they lean on:

• AI detector scores from a mix of tools

• Perplexity and burstiness stats

• Styling quirks that look “AI like”

From my logs and comparisons:

• Short sentences with uniform length scored worse

• Heavy use of em dashes, colons, and very “neat” structure triggered flags

• Repeated patterns like “In this article, we will…” or “On the other hand” were a problem

If your content went through HIX Bypass and then hit GPTZero, GPTZero often still reads it as 100 percent AI. Their own internal checker then says “human” based on a different mix of detectors. You end up stuck in the middle.

- What documentation matters

Support ignores half the things people send. Here is what helped me get a straight answer:

Useful:

• Original text you submitted

• Output from HIX Bypass for that same text

• Screenshots of external detector results for both versions, especially GPTZero, ZeroGPT, and one or two others

• Timestamps and word counts, to show you tested within their limits

Less useful:

• Long essays about “I am a human writer”

• Vague complaints without hard numbers

• Only their internal report page, since they already know what it shows

If you want them to recheck something, send a simple, tight bundle:

“Here is the input, here is the output, here are three detector scores before and after. Here is the issue I see.”

Keep it short, under 300 words, or they tune out.
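If you want to script the bundle, here is a rough sketch that turns your input, output, and before/after detector scores into the short message described above. It refuses anything at 300 words or more. The function `build_ticket` and its fields are hypothetical. The scores are whatever you read off your own screenshots, because the function does not call any detector. The 300-word limit is this poster's rule of thumb, not a documented support policy.

```python
def build_ticket(input_text: str, output_text: str,
                 scores: dict[str, tuple[float, float]], issue: str) -> str:
    """Assemble a short support message: input, output, scores, issue.

    `scores` maps a detector name to (AI % on your input, AI % on the HIX output),
    copied by hand from your own screenshots; nothing here calls a detector.
    """
    lines = [
        f"Input: {len(input_text.split())} words (full text attached).",
        f"Output: {len(output_text.split())} words (full text attached).",
        "Detector scores, AI % before -> after:",
    ]
    for name, (before, after) in scores.items():
        lines.append(f"- {name}: {before:g}% -> {after:g}%")
    lines.append(f"Issue: {issue}")

    message = "\n".join(lines)
    word_count = len(message.split())
    # Rule of thumb from this thread: support tunes out past roughly 300 words.
    if word_count >= 300:
        raise ValueError(f"message is {word_count} words; trim it below 300")
    return message
```

The full input and output texts go in as attachments. Only their word counts appear in the message body, so the body stays short.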

- Appeal or reconsideration options

They do not advertise a formal appeals flow, but you still have a few angles.

What worked for me once:

• Ask for a “manual review of this batch” instead of saying “appeal”

• Point to a specific detector, for example “Your page shows Human for GPTZero, but GPTZero itself reports 100 percent AI. Please explain the mismatch.”

• Refer to their refund terms with dates and word counts, and show that you are inside the window and under the cap

If you want a reconsideration, spell out:

• Which part you want reprocessed

• Which detector you target

• What success would look like, for example “text that scores under 10 percent AI on GPTZero”

Do not send huge walls of text. Clear request, clear numbers.

- Where I disagree a bit with @mikeappsreviewer

They are right about the bad refund structure and the weird output issues. I had the same square bracket nonsense and broken sentences.

I am a bit less harsh on the “99.5% success” thing. On short social-style posts under 150 words, and with extra manual tweaks, I did get HIX Bypass output that slipped past some campus-level checkers. It is not zero value; it is just very narrow value.

If you write long-form or client work, their terms and detection inconsistency hurt you more than they help.

- Alternative that fits this use case better

For anything where you want cleaner rewrites and more predictable detector behavior, Clever AI Humanizer did a better job for me. It produced text that read closer to a normal person, and it scored better on several detectors.

If you want something that helps with AI detection while keeping your text readable, that is where I would look first. I use it as a first pass, then do a manual edit on tone and structure.

- How to move forward on your current review

Practical steps:

• Collect your input, HIX output, and external detector screenshots

• Check that you are under their refund word cap and within 3 days, if that applies

• Open a ticket asking for “manual review of this content and explanation of detector mismatch”

• If they refuse or dodge, decide fast if you want to push for a refund before the window closes

If your goal is future safe use rather than a fight over this one review, I would stop relying on HIX Bypass as your main fix. Use a tool that behaves more consistently, then run your own checks and keep everything backed up with timestamps and scores.

They basically run this stuff like a black box, so your confusion is kinda normal.

From what I’ve seen and what @mikeappsreviewer and @waldgeist ran into, you can assume three buckets in their “review” even if they never spell it out:

- Hidden scoring criteria

They likely mix together:

- Results from a few AI detectors, weighted however they feel like

- Perplexity / burstiness stats

- Some pattern rules for “AI-y” phrasing and punctuation

Where I disagree a bit with the other two is that I don’t think they consistently apply those rules. Same text on two different days can get treated differently, which makes arguing about criteria kind of a joke. It is less “policy” and more “vibes plus whatever their backend says at that moment.”

- What actually matters in your docs

Stuff that tends to get traction when you push back:

- The exact original text you uploaded

- The exact text they output

- Screenshots from multiple external detectors, side by side, same timestamp

- Your account info plus word counts so you can prove you did not blow past their usage or refund triggers

I would skip the emotional essay about being human, skip long narratives, and skip attaching twenty PDFs. One clean message with clear evidence works way better than a wall of text. They are not reading your life story.

- Appeal / recon in practice

There is no real “appeals” pipeline, but you can still try to corner them a bit:

- Ask for a technical explanation of why their internal detectors say one thing while GPTZero or others say the opposite

- Ask for a one time manual review of that specific batch, not your entire account

- If you are inside the 3-day window and under their tiny word threshold, reference that explicitly and tie it to either a refund or a reprocess

The key is to be very specific. Something like:

- “This 800 word article, submitted on [date], was processed once. Your report says ‘human’ for detector X, but detector X on its own site says 98 percent AI. Please clarify or reprocess.”

You are not guaranteed a recon, but vague “I’m confused, please check” messages almost never go anywhere.

- Where to go from here

Personally, I treat HIX as unreliable for anything important. Inconsistent detection, weird writing artifacts, and the whole refund/word-cap trap are just not worth the headache. If your real goal is content that reads naturally and scores lower across different detectors, Clever AI Humanizer has been a lot more predictable in real use. I still run manual edits after it, but it does not break sentences or throw in random bracket junk as often.

- Side note on that Reddit topic

Your “Best AI Humanizer Review on Reddit” line could be turned into something more click-friendly, like “detailed community reviews of the most reliable AI humanizers.”

That kind of phrasing sounds more natural and pulls in people actually looking to compare tools, including things like Clever AI Humanizer, instead of just chasing whatever HIX claims on its landing page.

Bottom line, for this specific review: gather your input, their output, and three detector screenshots, then send a short, targeted message asking what failed and what they are willing to redo. For anything going forward, I would not build a workflow around their “99.5 percent” promise, because in real tests that number looks more like a marketing fantasy than a reliable metric.

Here is what I would add, without rehashing what has already been said.

1. What HIX is probably doing behind the curtain

Others pointed to detectors and perplexity. I agree, but I suspect something slightly different:

- They seem to weight “structure” more than people think. Paragraph rhythm, intro / outro symmetry, and predictable transitions can quietly tank a piece, even if wording looks varied.

- I have seen near-identical content where only the headline style was changed and the review result flipped, which suggests they are not using a stable, frozen rule set. It feels like they retrain or tweak often, so you can never truly “learn” their system.

So instead of trying to reverse engineer criteria, I would treat HIX reviews as noisy, not authoritative. That is the real trap: assuming a failed HIX bypass equals “this content is definitely AI” when it often just means “this content tripped our current flavor of rules.”

2. Documentation angle that almost nobody tries

What @waldgeist and @mikeappsreviewer suggested about sending input/output pairs is spot on. One extra layer you can add:

- Save version-controlled drafts that clearly show your manual edits over time. Tools like simple diff viewers or even tracked changes in a doc can demonstrate non-AI-like revision patterns.

- When you present your case, show: “Here is the raw draft, here is the mid-edit version, here is final, here is what HIX saw.” That timeline looks very different from a single-shot AI generation.

Some support teams react better to a clear editing trail than to detector screenshots alone, since it hints at genuine workflow rather than output shopping.
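For the editing trail, Python's standard `difflib` module can produce a plain unified diff between consecutive drafts, similar to what a diff viewer shows. This is a minimal sketch. It assumes you have saved each draft as plain text. The `revision_trail` helper and the draft labels are hypothetical.

```python
import difflib


def revision_trail(drafts: list[tuple[str, str]]) -> str:
    """Return unified diffs between consecutive (label, text) drafts."""
    chunks = []
    # Pair each draft with the next one: raw -> mid-edit, mid-edit -> final, ...
    for (old_label, old_text), (new_label, new_text) in zip(drafts, drafts[1:]):
        diff = difflib.unified_diff(
            old_text.splitlines(),
            new_text.splitlines(),
            fromfile=old_label,
            tofile=new_label,
            lineterm="",  # lines were split without newlines, so join them ourselves
        )
        chunks.append("\n".join(diff))
    # Drop empty chunks (drafts that did not change) and separate the rest.
    return "\n\n".join(chunk for chunk in chunks if chunk)


if __name__ == "__main__":
    drafts = [  # hypothetical drafts
        ("raw draft", "The tool failed.\nI was annoyed."),
        ("mid-edit", "The tool failed on GPTZero.\nI was annoyed."),
        ("final", "The tool failed on GPTZero.\nThat annoyed me."),
    ]
    print(revision_trail(drafts))
```

Pasting that output next to the final version gives support a compact view of what changed at each step. Tracked changes in a doc give them the same information if you prefer not to script it.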

3. About appeals and recons

I slightly disagree with the idea that you should frame everything only in terms of detectors like GPTZero. That can backfire because their safest move is to say “external tools vary; we rely on ours.” Instead:

- Ask for clarification on their internal thresholds for “human” versus “AI influenced.” Even if they will not give numbers, forcing them to talk about thresholds often leads to more specific responses.

- Phrase one of your questions as “What change to this content would have led to a pass in your system?” You might get hints like “shorter sections” or “higher lexical diversity” which at least tells you how they are looking at things.

No guarantee, but it sometimes turns a generic support reply into a more useful technical one.

4. Clever AI Humanizer in this picture

Since you mentioned being confused about criteria and outcomes, the tool you pick matters less than how predictably it behaves.

Pros I have seen with Clever AI Humanizer

- Output tends to read closer to a natural draft, which makes manual polishing much easier.

- Scores across a spread of detectors are more consistent. You may still get flagged in strict systems, but you see fewer wild contradictions from one tool to another.

- It usually avoids the obvious artifacts others noted in HIX, like broken sentences or unexplained brackets, so you spend less time fixing mechanical issues.

Cons worth keeping in mind

- It is not a magic “undetectable” button. Heavier institutional systems or custom campus detectors can still flag you if you do not manually revise tone and structure.

- On very niche or technical topics, the rewriting can flatten nuance, so you have to reinsert domain specific phrasing.

- If you push it to over-humanize, the style can drift into slightly fluffy or bloggy territory, which might not fit strict academic or corporate style guides.

Net result: I would treat Clever AI Humanizer as a first pass for readability and more reliable detector behavior, then do a real human edit, not as a one click bypass solution.

5. How your approach can differ from others in this thread

You already have solid tactical advice from @waldgeist, @sognonotturno and @mikeappsreviewer. To complement that, I would adjust strategy like this:

- Stop chasing a single tool’s “pass.” Treat HIX, GPTZero, and others as signals, not judges.

- Build your own small checklist for every piece:

  - Variety in sentence length and structure.

  - Topic-appropriate voice instead of generic “blog tutorial” tone.

  - Clear evidence of manual revision, not just one smooth auto rewrite.

- Use one humanizer such as Clever AI Humanizer for the heavy lifting, then write at least one full paragraph from scratch and rewrite another by hand. Those two chunks of clearly non-AI text can shift the overall profile more than people expect.

6. For your current HIX bypass review mess

If you are deciding what to do next:

- Do a quick side-by-side comparison: your original, HIX output, Clever AI Humanizer output, and a lightly self-edited version.

- Run those through two or three detectors and screenshot everything.

- When you contact HIX, focus less on “prove I am human” and more on “explain this inconsistency between your display and these concurrent results, and clarify what change would alter the outcome.”

If they stay vague or dismissive, take that as a data point about their reliability rather than a verdict on your writing. The goal is not to beat one opaque system. It is to end up with content that survives a mix of checks and still reads like something you are willing to put your name on.