I’ve started relying on AI tools to read or generate product reviews before I buy anything, but now I’m worried they might be biased, outdated, or even hallucinating details. I don’t want to waste money on bad products, but I also don’t have time to research everything manually. How can I safely use AI-generated reviews to make smarter buying decisions, and what red flags or best practices should I follow so I don’t get misled?

Walter Writes AI – my short, messy review

I tested Walter Writes AI because I wanted something to run text through before sending it to a client that still runs AI detectors on everything. The results were all over the map.

I only had the free plan, which locks you into the “Simple” mode. The site says paid users get “Standard” and “Enhanced” levels that do a better job against detectors, but I did not pay to test those.

Here is what I saw.

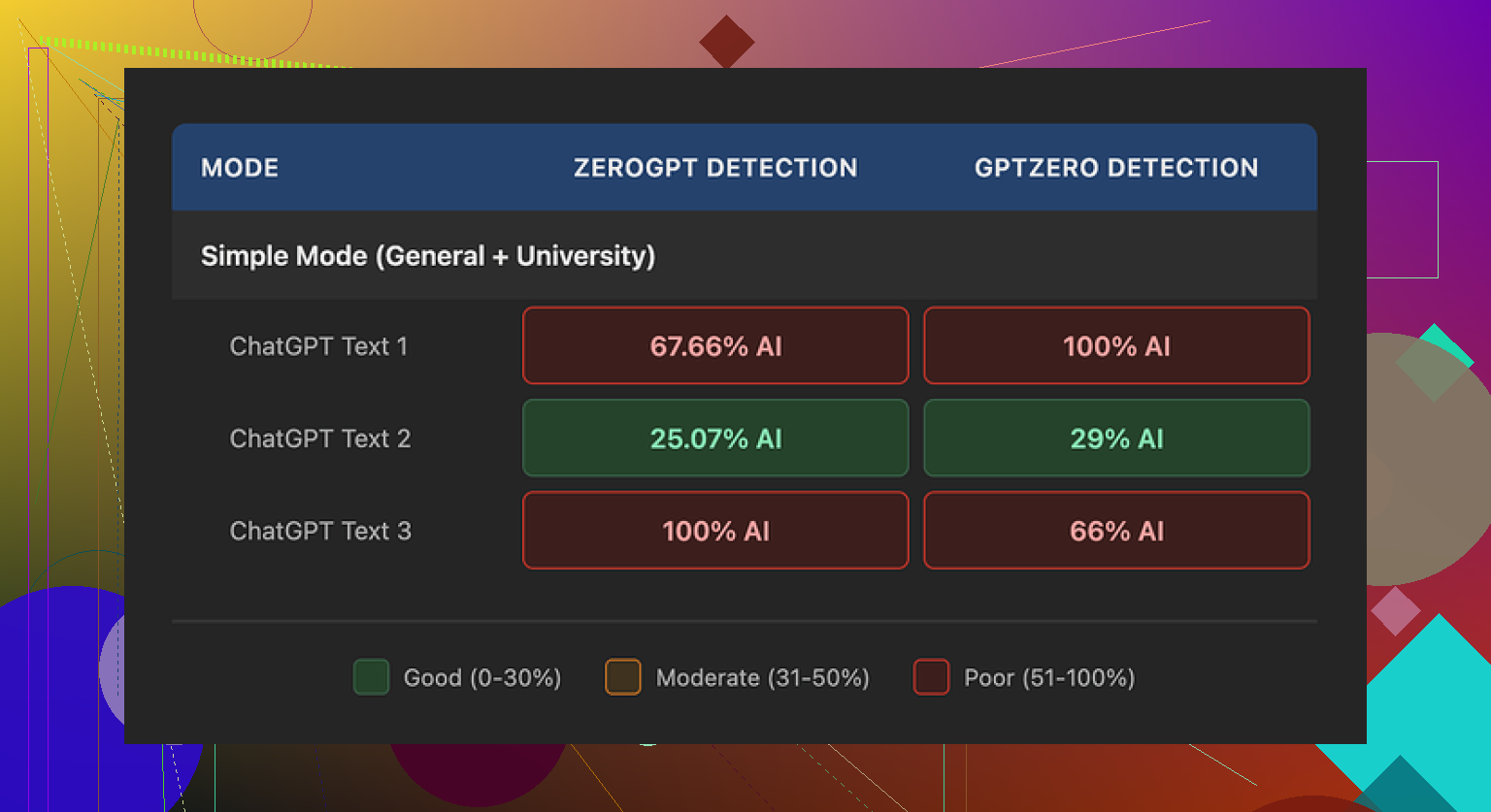

Score results on detectors

I pushed three different samples through Walter, then checked them on a couple of detectors.

Sample 1

GPTZero said 29% “AI”

ZeroGPT said 25% “AI”

For a free humanizer tier, that was not bad at all. Most free tools I have tried get flagged way higher, sometimes over 80% on both sites.

Sample 2

One of the detectors went straight to 100% AI.

The other one was high enough that I would not send it to a picky client.

Sample 3

Same story as sample 2. One detector showed 100% AI, instant fail.

So, one sample looked decent, the other two looked like I had not changed anything. That kind of inconsistency makes it hard to trust for anything serious.

Writing quirks I kept seeing

The bigger problem for me was how the text felt.

A few patterns showed up over and over:

- Weird semicolon use

It kept throwing semicolons into places where a normal person would use commas or just split it into two sentences.

Example pattern:

“Climate change is a serious issue; it affects people worldwide; today, we see the results; today, communities struggle; today, action is needed.”

That sort of thing. It reads stiff and off.

- Repeated words in a tight space

In one sample, it used the word “today” four times inside three sentences. I did not push it with strange prompts either. It feels like some baked-in phrase pattern.

- Bracket spam

It leaned hard on parenthetical examples like “(e.g., storms, droughts)” and similar structures.

That type of repeated pattern screams “AI output” to anyone who reads a lot of this stuff, and detectors tend to pick up on that too.

These quirks made the text harder to pass off as normal writing, even when the scores were lower.

Pricing and limits

Here is what the pricing looked like when I checked it:

- Starter plan: 8 dollars per month if you pay yearly, 30,000 words per month.

- Unlimited plan: 26 dollars per month. Still caps each individual submission at 2,000 words, so you need to chunk longer pieces.

- Free tier: gives you 300 words total to test, not 300 per day or per month. You burn through that in a few runs.

So if you need to run longer documents, you will end up breaking them into pieces, which slows the workflow down. Also, if you care about consistency of tone, chopping content into many 2,000-word segments can cause jumps in style.
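
If you do end up chunking, breaking on paragraph boundaries at least avoids cutting a sentence in half. Here is a minimal Python sketch of that. It assumes paragraphs are separated by blank lines and that a "word" is anything separated by whitespace, which may not match how the tool itself counts. `chunk_words` is a name I made up for illustration, not something the site provides.

```python
def chunk_words(text: str, max_words: int = 2000) -> list[str]:
    """Split text into chunks of at most max_words words,
    breaking at paragraph boundaries where possible."""
    if max_words < 1:
        raise ValueError("max_words must be at least 1")
    chunks: list[str] = []
    current: list[str] = []  # paragraphs collected for the chunk being built
    count = 0                # words in `current`
    for para in text.split("\n\n"):
        words = para.split()
        if not words:
            continue
        # A single paragraph over the cap has to be split by raw word count.
        if len(words) > max_words:
            if current:
                chunks.append("\n\n".join(current))
                current, count = [], 0
            for i in range(0, len(words), max_words):
                chunks.append(" ".join(words[i:i + max_words]))
            continue
        # Start a new chunk if this paragraph would push us over the cap.
        if count + len(words) > max_words:
            chunks.append("\n\n".join(current))
            current, count = [], 0
        current.append(para.strip())
        count += len(words)
    if current:
        chunks.append("\n\n".join(current))
    return chunks
```

This only handles the splitting. It does nothing about the style jumps between chunks, so you would still want to reread the joined result.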

Refund policy and data stuff

This part bothered me more than the mediocre output.

The refund wording on the site had heavy language about chargebacks, with threats of legal action if you dispute a charge. It reads as aggressive for a small subscription tool.

On top of that, the way they describe data retention around submitted text felt vague. I did not see a clear, plain statement that they delete input or avoid reusing it. If you work with anything sensitive, that matters.

What I ended up using instead

While testing different tools, I kept going back to Clever AI Humanizer. For my use, it produced more natural output on average and did not ask for payment.

They also have some walk-throughs and user posts linked here, which helped:

Humanize AI tutorial on Reddit

https://www.reddit.com/r/DataRecoveryHelp/comments/1l7aj60/humanize_ai/

Clever AI Humanizer review on Reddit

https://www.reddit.com/r/DataRecoveryHelp/comments/1ptugsf/clever_ai_humanizer_review/

If you write a lot and your clients or teachers still run content through GPTZero or ZeroGPT, my takeaway is this:

Walter’s free mode is alright for experimenting and sometimes gives lower scores than other free tools, but the writing tics and inconsistent detection results made it hard for me to trust for anything important. I ended up parking it and sticking with tools that feel less robotic and do not hide better modes behind a paywall.

Short answer. Treat AI product reviews like one noisy data point, not your main filter.

Here is what I’d do if you want to use AI but avoid wasting money.

- Use AI to summarize, not to “decide”

Ask the AI to:

• Summarize pros and cons from existing user reviews on Amazon, Walmart, Best Buy, Reddit, etc.

• Pull out patterns like “many users complain about battery” or “most praise build quality”.

Do not let it hallucinate. Always say something like:

“Only summarize what real users say from known sources. If info is missing, say you do not know.”

If it starts talking about features that are not in the product page, treat that as a red flag.

- Force it to show sources

Ask it:

“List the exact review quotes you based this on, and where they are from.”

Then you read those quotes yourself.

If it gives vague paraphrases and no concrete quotes, ignore the output.
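
If you pasted the reviews in yourself, you can check the quotes mechanically instead of eyeballing them. A rough Python sketch, assuming you have the pasted reviews and the quotes the AI returned as plain strings. The function names are hypothetical, and the matching is deliberately lenient (case, whitespace, and curly quotes are ignored), so it catches invented quotes but not subtle rewording.

```python
import re

def _normalize(text: str) -> str:
    """Lowercase, unify curly quotes, and collapse whitespace for lenient matching."""
    text = text.replace("\u2019", "'").replace("\u2018", "'")
    text = text.replace("\u201c", '"').replace("\u201d", '"')
    return re.sub(r"\s+", " ", text).strip().lower()

def unverified_quotes(quotes: list[str], reviews: list[str]) -> list[str]:
    """Return the quotes that do not appear in any of the review texts."""
    haystacks = [_normalize(r) for r in reviews]
    missing = []
    for quote in quotes:
        needle = _normalize(quote)
        # An empty "quote" counts as unverified too.
        if not needle or not any(needle in h for h in haystacks):
            missing.append(quote)
    return missing

# Made-up review text, for illustration only.
reviews = [
    "Battery died after two  weeks. Build quality is great.",
    "Fan is loud under load.",
]
quotes = ["battery died after two weeks", "Fan is loud", "Screen cracked on day one"]
print(unverified_quotes(quotes, reviews))
```

Anything this returns is a quote the model could not have taken from your text, which is the red flag described above.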

- Lock it to a time window

Your “outdated” worry is valid.

Say:

“Only use reviews or info from 2023 and 2024.”

If it still talks about things like “recently released” for a 2019 product, that tells you it is guessing.

- Use AI to compare, not to hype

Have it do structured comparisons:

“Make a table with these fields: price, warranty length, common issues users mention, return rate if available, noise level, etc.”

You check each field against:

• Official product page

• Top reviews (sorted by “most helpful” or “most recent”)

• One or two independent review sites

If anything looks off, you know where the hallucination is.

- Balance star ratings with text analysis

Quick manual method:

• Sort by “most recent” and scan 10 to 20 reviews.

• Look for repeated issues, not one‑off rants.

• Pay attention to 3‑star reviews, since they often give the most balanced info.

Then, you can feed those specific reviews to an AI and ask:

“Summarize only these 20 reviews. Do not invent anything not present in this text.”

Copy and paste the chunks in. This avoids the model roaming the web in a vague way.

- Deal with bias

AI models tend to:

• Favor popular brands.

• Repeat marketing language.

• Smooth over rare but serious problems.

So add a prompt like:

“List 5 serious drawbacks even if reviewers mention them only a few times. Prioritize safety or failure issues over convenience issues.”

Then ask the opposite:

“Now list 5 reasons a person should avoid buying this at all.”

You force it to look for negatives, not only positives.

- Price and return policy still matter more

Even with perfect AI analysis, things slip.

So before buying:

• Check return window and restocking fees.

• Check warranty terms.

• Prefer sellers with easy returns for unknown brands.

A good return policy protects you more than any AI summary.

- About Walter Writes AI and similar tools

For what you described, I would not lean on Walter Writes AI much. It is tuned more for “humanizing” text than for accurate product research.

As @mikeappsreviewer said, it has quirks and inconsistency on detection, and I would add it is also not ideal for factual reliability when you care about money spent.

If you want to run AI text through something before trusting it, Clever AI Humanizer is more useful for smoothing obvious AI patterns so you can share or quote text without it screaming “AI”.

That said, a humanized hallucination is still a hallucination. Use it for style, not for truth.

- Simple practical workflow

What I’d do for any non-trivial purchase:

• Step 1: Skim product page and spec sheet yourself.

• Step 2: Manually scan 10 to 20 recent reviews on 2 sites.

• Step 3: Paste those reviews into AI and ask for a strict summary with no extra claims.

• Step 4: Ask AI to write “reasons to buy” and “reasons to avoid” based only on those reviews.

• Step 5: Check price, warranty, returns. Then decide.

Once you lock AI into “summarize this fixed text” instead of “go find stuff and opine”, the bias and hallucination risk drops a lot.
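
Steps 3 and 4 can be sketched as a small prompt builder, so you send the same strict wording every time. This is a minimal Python example, not tied to any particular AI tool. `build_strict_prompt` is a hypothetical helper, and asking for bracketed review numbers is my own assumption, added to make the answer easier to check by hand.

```python
def build_strict_prompt(reviews: list[str]) -> str:
    """Assemble a prompt that confines the model to the pasted review text."""
    if not reviews:
        raise ValueError("paste at least one review")
    # Number each review so the model can point back at its source.
    numbered = "\n".join(
        f"[{i}] {r.strip()}" for i, r in enumerate(reviews, start=1)
    )
    return (
        f"Summarize only these {len(reviews)} reviews. "
        "Do not invent anything not present in this text. "
        "If info is missing, say you do not know.\n"
        "Then list reasons to buy and reasons to avoid, "
        "citing the review number in brackets for each point.\n\n"
        f"{numbered}"
    )

# Made-up reviews, for illustration only.
print(build_strict_prompt(["Great battery. ", "Hinge broke."]))
```

You paste the printed text into whatever chat tool you use. The numbering is what lets you trace each claim back to a specific review.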

You’re not crazy to be worried. Using AI to “pre‑shop” products is like asking a smart but compulsive liar for advice: useful, if you keep it on a short leash.

Couple thoughts that don’t just repeat what @mikeappsreviewer and @sonhadordobosque already said:

1. Stop asking AI “Is this product good?”

That kind of open question is exactly where hallucinations creep in. It’ll start inventing features, quote “users” that never existed, or act like it knows return rates when it doesn’t.

Instead, ask narrow, almost boring stuff like:

- “Given this exact spec sheet and this price, what kind of user is this best for?”

- “What tradeoffs does a 250‑nit, 60 Hz, 8 GB RAM laptop usually have compared to 400‑nit, 120 Hz, 16 GB?”

So you’re using AI to reason about known facts, not invent new ones.

2. Treat AI like a skeptical friend, not a cheerleader

Most people prompt like:

“Tell me why this is a great buy.”

You’ll get hype. Try the opposite:

- “Assume I’m about to waste money on this. From what’s public on the page, what looks like a trap or corner cut?”

- “If this product fails, what is the most likely point of failure, based on its specs and design?”

This pushes the model to critique instead of market.

3. Don’t rely on it to be “current” unless you fix the timeframe yourself

Even if you say “use 2023–2024,” it might still echo older review patterns. So flip the logic:

- You manually grab 5–10 recent reviews from Amazon / Best Buy / Reddit (last 3–6 months)

- Paste them in and say:

“Only use the text I pasted. Ignore your training data. What changed recently compared to older reviews of similar products in general?”

This way you’re using AI to compare these specific people vs generic expectations. If it drifts into “other users say…” you know it’s cheating.
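
If you collect reviews into a file or spreadsheet first, fixing the timeframe yourself is a one-line filter. A small Python sketch, assuming each review is a dict with a `date` and a `text` field. That record shape is my assumption, not a format any store exports.

```python
from datetime import date

def within_window(reviews: list[dict], start: date, end: date) -> list[dict]:
    """Keep reviews dated inside [start, end], newest first."""
    kept = [r for r in reviews if start <= r["date"] <= end]
    return sorted(kept, key=lambda r: r["date"], reverse=True)

# Made-up records, for illustration only.
reviews = [
    {"date": date(2024, 5, 1), "text": "a"},
    {"date": date(2023, 1, 1), "text": "b"},
    {"date": date(2024, 6, 10), "text": "c"},
]
recent = within_window(reviews, date(2024, 3, 1), date(2024, 6, 30))
print([r["text"] for r in recent])
```

Only what survives the filter gets pasted in, so the model never sees the old reviews in the first place.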

4. Use AI to reason about your priorities, not “average” buyers

Detectors, humanizers, product review bots, all of it tends to converge on some generic “average” user. That’s useless if, say, you care more about noise level than raw power.

Give it a weighting:

“On a 0–10 scale, I care about:

Price 5 / Durability 9 / Noise 10 / Looks 2 / Brand 1.

Using only the text and specs below, score this product for me and explain each score.”

Now it has to argue its case instead of just saying “lots of users like X.” You’re not just asking “is it good,” you’re asking “is it good for my weird priorities.”
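
To see what the weighting does to the final number, you can combine the per-criterion scores yourself. A short Python sketch, assuming a plain weighted average, which is one reasonable way to combine them and not something the model is guaranteed to do. The weights are the ones from the prompt above. The scores are invented for illustration.

```python
def weighted_score(weights: dict[str, float], scores: dict[str, float]) -> float:
    """Weighted average of per-criterion scores (0-10), using 0-10 weights."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"no score for: {sorted(missing)}")
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(weights[k] * scores[k] for k in weights) / total_weight

weights = {"price": 5, "durability": 9, "noise": 10, "looks": 2, "brand": 1}
# Hypothetical scores, not from any real product.
scores = {"price": 7, "durability": 6, "noise": 3, "looks": 9, "brand": 8}

overall = weighted_score(weights, scores)
plain_average = sum(scores.values()) / len(scores)
print(round(overall, 1), round(plain_average, 1))
```

With these made-up numbers the plain average is 6.6, but the weighted score comes out around 5.4, because the poor noise score carries the heaviest weight. That gap is the difference between “good on average” and “good for my priorities.”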

5. Shortcut to spotting AI‑tainted or fake reviews

You’re worried about AI hallucinations; I’d be equally worried about the reviews themselves being AI‑written. Quick hack:

Ask the model:

“From these 20 reviews, flag any that look templated, suspiciously generic, or likely AI/fake. Explain why, but do not remove them, just tag them.”

You’re basically using one AI to sniff out the writing tics of another. Not perfect, but it surfaces copy‑pasted patterns, sudden tone shifts, same phrasing across “different” users, etc. Then you can weight those reviews less.
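
The “same phrasing across different users” part does not need an AI at all. Here is a rough Python sketch using the standard library's `difflib.SequenceMatcher` to flag review pairs that are nearly identical. The 0.8 threshold is an arbitrary starting point I picked, not a tested cutoff, and comparing every pair is only practical for a few dozen reviews.

```python
from difflib import SequenceMatcher
from itertools import combinations

def similar_pairs(
    reviews: list[str], threshold: float = 0.8
) -> list[tuple[int, int, float]]:
    """Return (i, j, ratio) for review pairs whose similarity meets the threshold."""
    # Normalize case and whitespace so trivial edits do not hide a copy.
    cleaned = [" ".join(r.lower().split()) for r in reviews]
    pairs = []
    for i, j in combinations(range(len(cleaned)), 2):
        ratio = SequenceMatcher(None, cleaned[i], cleaned[j]).ratio()
        if ratio >= threshold:
            pairs.append((i, j, round(ratio, 2)))
    return pairs

# Made-up reviews, for illustration only.
reviews = [
    "This product exceeded my expectations, highly recommend it!",
    "This product exceeded my expectations, highly recommend it!!",
    "Fan is loud and the hinge broke in a month.",
]
print(similar_pairs(reviews))
```

This only catches near-copies. Reviews that are generic but worded differently still need a human (or the tagging prompt above) to spot.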

6. Where Walter Writes AI fits in (and where it really doesn’t)

Walter Writes AI, from what you and @mikeappsreviewer described, is built to humanize text, not to be a reliable shopping assistant. The semicolon spam, repetition, and style quirks they mentioned are exactly the kind of thing you don’t want anywhere near factual buying decisions. I wouldn’t rely on it to generate or “improve” product reviews; that’s just polishing guesses.

If you need to clean up AI‑generated summaries so they don’t scream “robot” before you share them (like prepping something for a picky boss or client), something like Clever AI Humanizer is actually a better fit: use it purely as a style filter after you’ve already checked the facts. It can make the text sound more natural without pretending to be an expert on which TV to buy. Just remember: humanized nonsense is still nonsense if you didn’t verify it first.

7. When to ignore AI entirely

There are situations where I’d say: don’t even bother with AI for the decision:

- Safety‑critical stuff: car seats, climbing gear, medical devices, etc.

- Super niche / technical gear where 2–3 real expert reviews exist and that’s it

- Very new products where there simply aren’t enough real users yet

In those cases, it’s faster and safer to read 2 solid human reviews and the manual than to argue with a model about “confidence.”

If you keep AI boxed into “reason about what I paste” and “help me see tradeoffs for my priorities,” it becomes a decent tool instead of a hallucination slot machine. The moment you let it roam free to “tell you if something is good,” that’s when you start gambling with your wallet.