I’ve been considering using WriteHuman AI for content writing, but I’ve seen mixed opinions online and I’m not sure if it’s worth the time or money. Can anyone share real experiences with its accuracy, tone, and reliability, and whether it actually helps produce better human-like content?

WriteHuman AI review, from someone who tried to make it work and got burned a bit

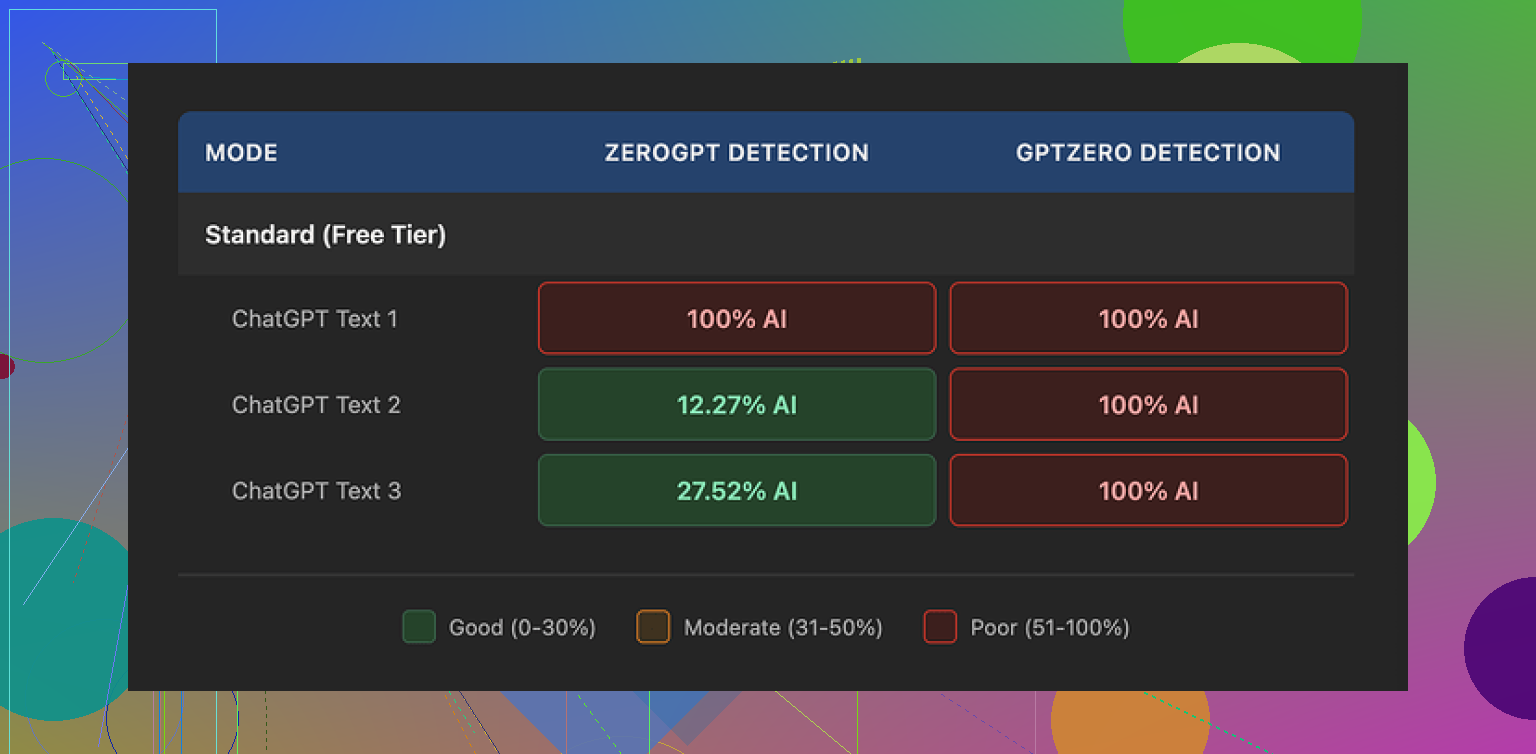

WriteHuman keeps name-dropping GPTZero in its marketing, so I decided to see if it actually holds up. Short version of my experience: it did not.

I took three different AI-written samples, ran them through WriteHuman, then checked each result on GPTZero.

All three came back as 100% AI.

Not “borderline”, not “mixed”, straight 100% AI on the same detector they advertise against. That was the first red flag for me.

ZeroGPT reacted differently but not in a way that gave me much confidence:

- First sample: 100% AI

- Second sample: about 12% AI

- Third sample: around 28% AI

So the tool sometimes knocked the number down, sometimes did nothing, and the behavior felt random. If you need consistent evasion, that randomness becomes a problem fast.
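
To put a rough number on that randomness, here is a minimal Python sketch using the three ZeroGPT results above. The variable names are illustrative, the second and third values are approximate ("about 12%" and "around 28%"), and three samples is far too few for real statistics, so treat it as a sanity check only.

```python
from statistics import mean

# ZeroGPT "percent AI" scores after WriteHuman, one per sample.
# The 12 and 28 are approximate readings, not exact figures.
zerogpt_after = [100, 12, 28]

average = mean(zerogpt_after)
spread = max(zerogpt_after) - min(zerogpt_after)

print(f"average: {average:.1f}% AI")
print(f"spread (max - min): {spread} points")
```

A spread of 88 points across three similar AI-written inputs is what I mean by random.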

What the writing felt like

I expected at least some improvement in tone and flow. Instead, what I saw looked more like a rough content spinner that tries to fake “human-ness” by throwing in weird quirks.

Two things stood out.

- Abrupt tone shifts

  In one paragraph the output sounded formal and slightly stiff, then halfway through it switched to a more casual style with odd word choices. Think: “academic blog post suddenly turning into a Reddit comment.” That type of jump will not pass in real writing contexts. Your teacher, editor, or manager will catch it before any detector does.

- Sloppy typo baked into the output

  One of my runs had “shfits” instead of “shifts.” That is the kind of typo you might make while typing fast, but when it shows up in machine output marketed as “refined,” it feels like the system is forcing errors to look human. That might shave off a few percentage points on some detectors, but then you are stuck manually fixing quality issues anyway.

I get the logic: messy text looks more human. But when it gets in the way of clarity and readability, you end up rewriting half of it. At that point you might as well edit your own AI output manually.

Pricing and terms, where it lost me

This part bothered me more than the detection results.

Pricing

- Starts at 12 dollars per month on an annual plan for the Basic tier

- That Basic plan gives you 80 requests per month

So you lock into a subscription, pay a decent chunk, and still have a hard usage cap. For a tool that did not perform well in my tests, this felt steep.
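
For a sense of what that works out to, here is a quick back-of-the-envelope calculation in Python. It assumes the annual plan is simply 12 months at the quoted 12 dollars (I did not verify how they bill it), and the function name is made up for illustration.

```python
MONTHLY_PRICE_USD = 12      # Basic tier, annual plan
REQUESTS_PER_MONTH = 80     # hard cap on the Basic plan

def cost_per_request(requests_used: int) -> float:
    """Effective cost of one request if you only use `requests_used` of the cap."""
    if not 1 <= requests_used <= REQUESTS_PER_MONTH:
        raise ValueError("requests_used must be between 1 and the monthly cap")
    return MONTHLY_PRICE_USD / requests_used

# Assumption: the annual plan is billed as 12 x the monthly price.
annual_commitment = MONTHLY_PRICE_USD * 12

print(f"annual commitment: ${annual_commitment}")
print(f"per request at full usage: ${cost_per_request(80):.2f}")
print(f"per request at 20 uses: ${cost_per_request(20):.2f}")
```

So the best case is 15 cents per request, and it climbs quickly if you do not use the full quota.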

They mention an “Enhanced Model” and extra tone options on paid plans. I did not see enough in the default behavior to want to pay more to try that. Maybe it helps a bit, but the baseline was weak.

Refunds

Their terms are blunt: no refunds.

If it does not help you bypass AI detectors in your situation, that is on you. There is no safety net, no “if it fails for your use case we will work with you” type policy. You pay, you roll the dice.

Detection guarantee

They also admit in their own terms that they cannot guarantee bypass of any detector. That is fair from a legal standpoint, but when you combine it with:

- weak real-world results

- a no-refund policy

- and a subscription model

you need to be comfortable losing that money.

Data use

This is the part that will be a dealbreaker for a lot of people.

Anything you submit through WriteHuman is licensed for AI training. That means your input text can be used to further train their systems.

So if you work with:

- client documents

- internal reports

- unpublished drafts

- school work you do not want in someone’s training set

then your only safe move is to stay away from the service entirely. I did not see any “opt out” option described in what I read. It is baked into their terms.

What worked better for me

For comparison, I tried Clever AI Humanizer.

My experience there:

- Detection scores were lower across multiple tests

- No upfront paywall

- No stress about wasting a subscription slot every time you want to experiment

The detection drop was not perfect, but it at least moved the needle in a clear way for the same type of AI-generated input. For me that was more useful than hoping a paid tool might eventually perform well.

If you are trying to decide where to put your time and money

Here is how I would break it down from my own runs:

Use WriteHuman if:

- You are aware it might still score as AI on tools like GPTZero

- You are okay with no refunds

- You do not mind your text being used for AI training

- You treat it as an experiment, not a guaranteed solution

Skip WriteHuman if:

- You need consistent detector evasion for school or work

- You care about keeping your text out of training datasets

- You do not want to pay 12 dollars a month for something that might change nothing in your detection scores

- You hate sudden tone shifts and fixing random typos in “humanized” output

From hands-on testing, I ended up favoring Clever AI Humanizer. It gave me better results on detection tools and did not ask me to pull out a credit card to start testing.

I used WriteHuman for about two weeks on client blog posts and a couple of school-style essays. My take lines up with some of what @mikeappsreviewer said, but I had a few different results too.

Accuracy and tone

I fed it GPT-style drafts and asked for a more “human” tone. It did change the wording and sentence lengths. Detection scores on GPTZero in my case dropped from 100 percent AI to around 60–80 percent AI on average, not to “looks human” levels. On ZeroGPT I saw drops from 90–100 percent AI to 20–50 percent AI on some runs, while other runs barely moved. So it helps a bit, but it is not consistent.

The tone often felt off. You get a mix of formal and casual in the same paragraph. I had weird phrases like “on top of this matter” next to “on the flip side, you know”. I also saw small typos and odd commas that look forced, like it is trying to fake human mistakes. You will need to edit every output if you care about clean copy.

Reliability

For content writing work, it did not save time. I still had to

- Run the text through WriteHuman

- Check detectors

- Manually rewrite awkward bits and fix typos

By the time I finished, I could have rewritten the AI draft myself. For school use, depending only on this for detector evasion feels risky. One professor used GPTZero, and the text was still flagged “likely AI” even after WriteHuman.

Pricing and policy

The hard cap on requests plus subscription felt tight. I burned through the monthly quota fast while testing. The no refund policy is rough if you are experimenting. Also, the training usage of your inputs is a big deal if you handle client docs or anything under NDA. I stopped sending client stuff once I saw that part.

Where I slightly disagree with @mikeappsreviewer is on “zero value”. If your goal is to nudge some detectors down a bit and you already plan to edit heavily, it has some use. I would not treat it as a plug-in, click-once, done solution.

Alternatives

For my workflow, Clever AI Humanizer worked better. Lower scores on GPTZero and ZeroGPT more often, less wild tone shifts, and no paywall to start testing. I still edited the output, but I spent less time fixing awkward phrasing.

Practical recommendation

Use WriteHuman only if:

• You want to experiment and you are fine losing the sub fee.

• You do not send sensitive or client text.

• You accept that you still need to rewrite and check everything.

If your priority is detection scores plus keeping your time and budget under control, I would test Clever AI Humanizer first, compare a few samples across GPTZero and ZeroGPT, then decide based on your own numbers.

Tried it for a full month on client blog posts + a couple of longform guides, so here’s the short version: it’s “meh” if you care about both quality and detector risk.

On accuracy / detection:

Similar to what @mikeappsreviewer and @chasseurdetoiles saw, my GPTZero scores dropped a bit but not enough to feel safe. Stuff that started at “very likely AI” usually ended up still flagged “likely AI”. ZeroGPT was more forgiving, but results felt kinda lottery-like. Sometimes big drop, sometimes almost no change with very similar input. That unpredictability is the killer for anything serious.

Tone:

This was my biggest issue. I’d describe it as “AI, but trying to cosplay as a distracted human.” Mixed registers in the same paragraph, weird turns of phrase, and the occasional typo that felt intentionally inserted. You can fix it, sure, but you’ll end up editing almost every sentence. For pure content writing, I spent more time cleaning than if I had just taken the original AI draft and human-edited it myself.

Reliability / workflow:

If you’re hoping for:

- paste AI text

- click humanize

- lightly skim & ship

…it’s not that. It becomes: run text, test on detectors, re-run or heavily edit, test again. That loop gets old fast. For me it only “worked” when I treated it as a rough rewrite tool, not a detector solution, and even then the stylistic quirks annoyed me.

Where I slightly disagree with them: I don’t think it’s totally useless. If your bar is “nudge some scores down and force myself to rephrase things,” it does that. But as a paid, quota-limited service with no refunds and auto-training on your content, the tradeoff is rough.

If you’re just experimenting and not using sensitive docs, I’d honestly start with something like Clever AI Humanizer first. It gave me more consistent drops on detectors and less chaotic tone, and you can see if it works for your use case before committing money anywhere. Then, if you still feel curious, treat WriteHuman as a secondary tool, not your main fix.

I’m mostly in the same camp as @chasseurdetoiles, @techchizkid, and @mikeappsreviewer on WriteHuman, but I’ll add a slightly different angle.

The core issue isn’t just “scores don’t always drop enough.” It is that WriteHuman feels like it optimizes for detector noise instead of for readable, consistent voice. That is backwards if you care about clients, grades, or your own brand. When tone zigzags between semi‑academic and “Reddit reply,” humans notice faster than machines.

Where I do disagree a bit: I don’t think the random typos are always a bad idea. Mild imperfection can help on some detectors and can push you out of that super-uniform GPT rhythm. The problem is that WriteHuman lets those quirks leak into places that matter, like headings and opening sentences, so you still have to line edit everything.

On cost: the combination of quotas, subscription, no refunds, and training on your text is a pretty harsh stack for something you cannot rely on. If this were a one‑time low fee or had a generous trial, it would be easier to recommend as a niche tool.

Re Clever AI Humanizer:

Pros

- More consistent drops in detectors across multiple reports, not just one

- Less chaotic tone and fewer “what human talks like this” moments

- Easier to test without committing cash up front

- Works decently as a first-pass rephrasing tool before your own editing

Cons

- Still not a magic “paste and forget” solution for GPTZero‑style tools

- You will need to refine the voice if you care about a strong personal or brand style

- Overreliance can make your writing feel generically “smoothed out” if you do not customize later

If your priorities are:

- staying under the radar of basic detectors,

- not wasting time on a messy clean‑up pass, and

- avoiding tight quotas plus data‑training worries,

then treating Clever AI Humanizer as a helper and keeping WriteHuman as “maybe I’ll test it once if they change pricing or policies” is the more rational move.

Bottom line: neither tool replaces real revision. For actual safety and quality, you still need a human brain pass, especially if anyone serious is grading or paying for the work.